Nicolas Cuperlier, teacher-researcher at the ETIS laboratory and head of its Neurocybernetics team, presents the progress of the MpNav project.

This innovative technology offers a new approach to autonomous vehicle navigation. Inspired by the cognitive mechanisms of mammals, MpNav stands out for its frugal neural architecture, which requires only a simple webcam and a laptop. The project paves the way for a variety of applications, especially in environments where GPS may be unavailable.

Focus on this disruptive technology that could transform our daily lives, and on the challenges it still faces before reaching full maturity.

Hello Nicolas, could you introduce yourself in a few words?

I'm a teacher-researcher at the ETIS laboratory, where I lead the Neurocybernetics team. The team aims to develop models closer to human cognition and to propose more autonomous robotic systems by following a bio-inspired, developmental approach to AI and robotics. My own work follows a neurorobotic approach: embedding in robots models of spatial cognition and navigation derived from computational neuroscience studies of mammals.

Could you tell us more about the MpNav project and what stage this project is at?

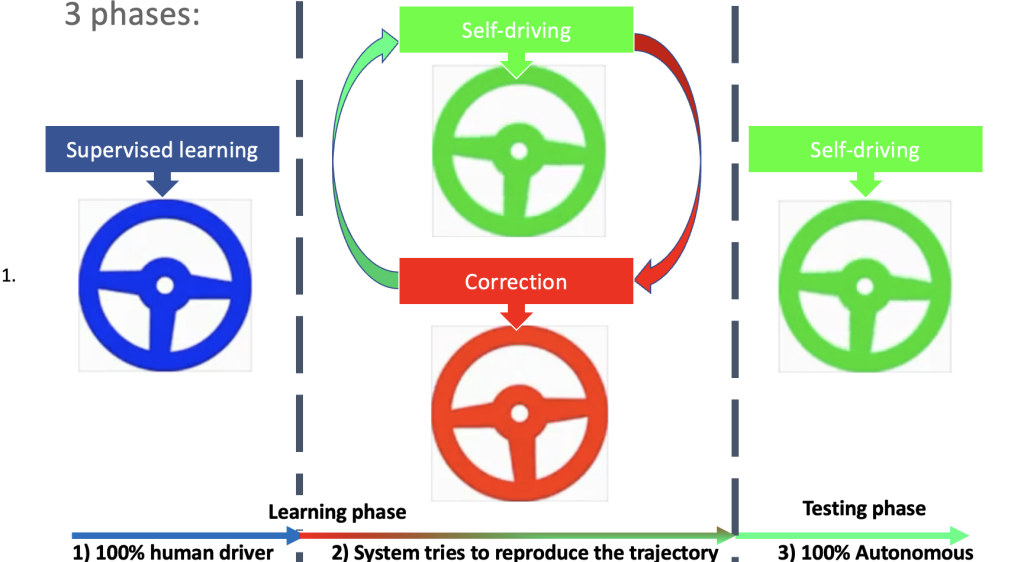

In partnership with ITE VEDECOM, we developed MpNav, a control architecture for the navigation of an autonomous vehicle using a simple webcam-type camera. Through interaction with a human driver, the model learns to reproduce trajectories that lead the vehicle from a starting point to its destination.

MpNav is a breakthrough innovation built on a neural architecture inspired by the mammalian brain. This approach, known as neurorobotics, differs from conventional robotics approaches and from those based on techniques such as deep learning. The technology proposed in MpNav is disruptive for two reasons. First, it relies on a type of spatial representation very different from what classical robotics uses. Second, it is part of a frugal approach to AI, with low data and energy consumption: it requires only a simple webcam and a laptop to operate, so it needs neither an expensive lidar nor large GPU cards. Two complementary types of learning take place simultaneously: a fast, one-shot learning of the visual scene that lets the vehicle localize itself (visual place learning), and an incremental learning by imitation of the driver, which learns the desired route by associating the place recognized at each moment with a direction.
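To make the two learning modes more concrete, here is a minimal Python sketch written for this article. It is not MpNav's neural architecture, whose technical details are not disclosed here. The class names `PlaceMemory` and `RouteLearner`, the toy feature vectors, the cosine-similarity matching, and the threshold and learning-rate values are all assumptions chosen for illustration. The sketch only shows the general idea: a new visual place is stored after a single exposure, while the direction attached to each place is refined gradually from the driver's heading.

```python
import math


class PlaceMemory:
    """One-shot store of visual place signatures (hypothetical, for illustration)."""

    def __init__(self, novelty_threshold=0.9):
        self.signatures = []
        self.novelty_threshold = novelty_threshold

    @staticmethod
    def _cosine(a, b):
        dot = sum(x * y for x, y in zip(a, b))
        norm_a = math.sqrt(sum(x * x for x in a))
        norm_b = math.sqrt(sum(y * y for y in b))
        return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

    def recognize(self, feature):
        """Return (place index, similarity); store the view at once if it is novel."""
        best_idx, best_sim = -1, -1.0
        for i, signature in enumerate(self.signatures):
            sim = self._cosine(feature, signature)
            if sim > best_sim:
                best_idx, best_sim = i, sim
        if best_sim < self.novelty_threshold:
            # One-shot learning: a single exposure creates the new place.
            self.signatures.append(list(feature))
            return len(self.signatures) - 1, 1.0
        return best_idx, best_sim


class RouteLearner:
    """Incremental place-to-direction association learned by imitation."""

    def __init__(self, learning_rate=0.5):
        self.learning_rate = learning_rate
        self.direction = {}  # place index -> (x, y) heading vector estimate

    def imitate(self, place, driver_heading_deg):
        """Move the stored direction for this place toward the driver's heading."""
        rad = math.radians(driver_heading_deg)
        target = (math.cos(rad), math.sin(rad))
        old = self.direction.get(place, target)
        self.direction[place] = (
            old[0] + self.learning_rate * (target[0] - old[0]),
            old[1] + self.learning_rate * (target[1] - old[1]),
        )

    def steer(self, place):
        """Return the learned heading in degrees, or None for an unknown place."""
        if place not in self.direction:
            return None
        x, y = self.direction[place]
        return math.degrees(math.atan2(y, x))


# Usage: two distinct views become two places; the driver is imitated twice at place 0.
places = PlaceMemory()
route = RouteLearner()
idx_a, _ = places.recognize([1.0, 0.0, 0.0])
idx_b, _ = places.recognize([0.0, 1.0, 0.0])
route.imitate(idx_a, 0.0)
route.imitate(idx_a, 20.0)
print(route.steer(idx_a))
```

Headings are stored as vectors rather than raw angles so that averaging behaves sensibly around the 0°/360° wrap-around. In a real system the feature vectors would come from camera images rather than hand-written lists.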

Target applications include parking in large car parks, driving in so-called urban canyons where GPS works less well, and frequently repeated routes such as the commute between home and work.

Currently, this technology is at a technology readiness level (TRL) between 3 and 4.

How is this technology being promoted and transferred?

This work is the result of several theses carried out in collaboration with VEDECOM since 2016. In 2022, at the end of S. Colomer's thesis, which enabled the first evaluations on a real vehicle, we began the process of filing a joint CYU/VEDECOM patent. We were then able to continue the project in pre-maturation through Sci-Ty's pre-maturation program, which we were awarded. This allowed us to recruit an engineer for a year to work on automating simulation tests, in order to complete our analysis of the model's robustness (different weather conditions, etc.).

We were also asked to contribute to Sci-Ty's white paper on its mobility strategy, in the form of a joint testimony with two other winners of the same programme.

The MpNav project has been presented (without disclosing technical aspects) at various events, such as the Techs-days organised by the PUI CY Transfer, or at a meeting of field committee 2 of ITE VEDECOM.

Thanks to Nicolas Cuperlier.

Want to know more?

Visit the ETIS laboratory website.